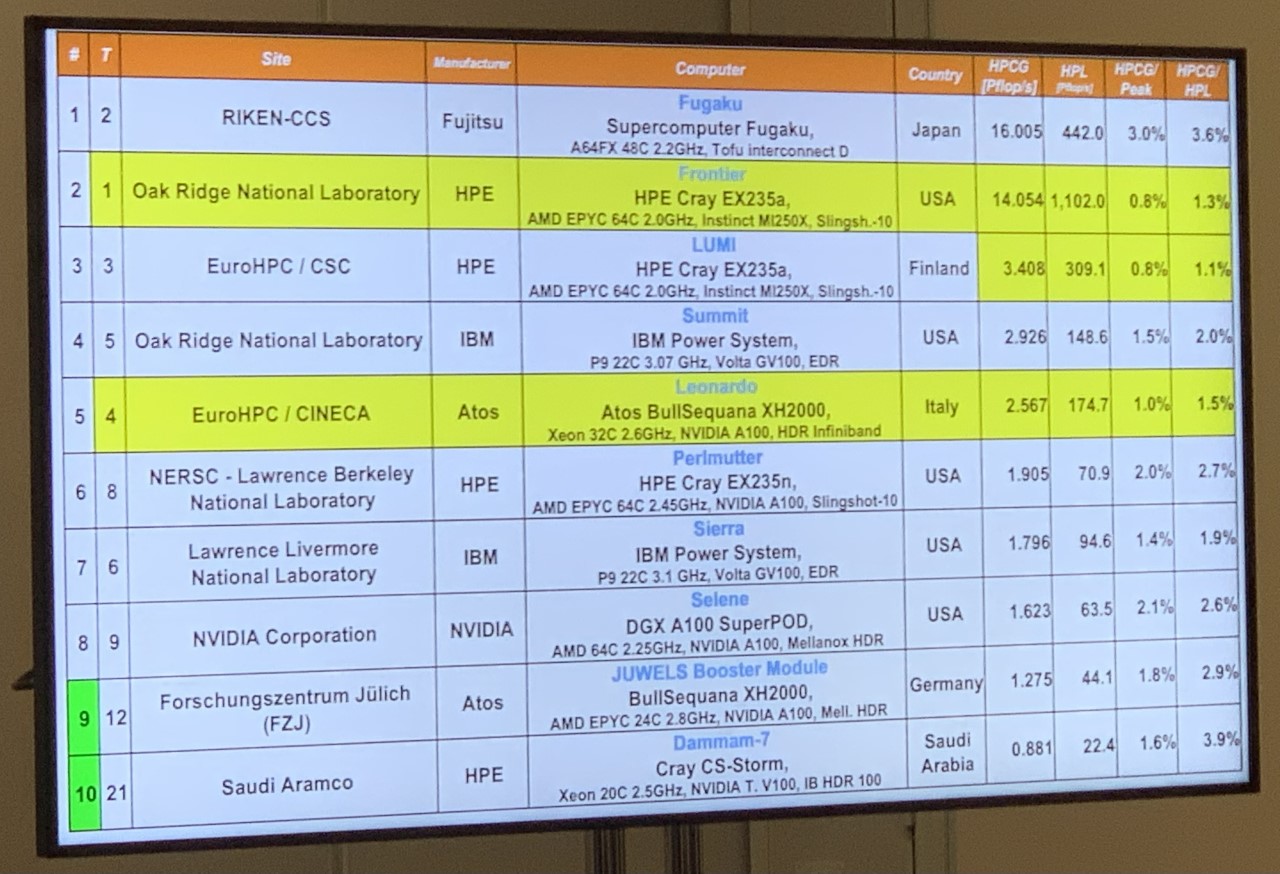

The 60th edition of the Top500 list, revealed today at SC22 in Dallas, Texas, showcases many of the same systems as the previous installment, with Frontier still out in front as the first official Linpack exascaler, clocking 1.102 exaflops. Installed at Oak Ridge National Laboratory, Frontier – a collaboration of the DOE, HPE and AMD – comprises 74 HPE Cray EX cabinets, housing 9,408 nodes, each equipped with one AMD Milan “Trento” Epyc CPU and four AMD Instinct MI250X GPUs.

Frontier also scored highest on the HPL-MxP benchmark with 7.9 exaflops. A companion benchmark to the Top500, HPL-MxP was formerly known as HPL-AI. The benchmark “seeks to highlight the convergence of HPC and artificial intelligence (AI) workloads based on machine learning and deep learning by solving a system of linear equations using novel, mixed-precision algorithms that exploit modern hardware,” according to the backers.

Lumi (the #3 Top500 system) posted the second-highest HPL-MxP score, 2.2 exaflops. It was followed by Fugaku (still in 2nd place on the Top500), which moved from its previous number one spot into third position with 2.0 exaflops.

Fugaku retained its number one spot on the HPCG (High Performance Conjugate Gradients) benchmark with 16 petaflops, 3 percent of peak. Frontier came in second with 14 petaflops, only 0.8 percent of peak.

“That low efficiency to peak is an indication that the machine struggles with data movement compared to floating point,” said Jack Dongarra, Top500 coauthor and progenitor of the High Performance Linpack benchmark, in an interview with HPCwire.

“While HPL is compute-intensive, HPCG is a data movement benchmark. It’s trying to capture something about 3D partial differential equations,” said Dongarra. “[These systems] don’t do data movement as well as they should. I think [that is] highlighting the weakness of these machines and allowing us to, I hope, use that information in designing the next generation.”

He credited those taking part in the HPCG project. “You can portray this like a race car. If you had a race car that was capable of going 200 miles an hour, and then it gets two miles an hour, you’re not gonna be very happy with that rate.”

There is only one new entrant in the top 10 cohort: EuroHPC’s Leonardo supercomputer, which landed on the list in fourth position with 174.70 Linpack petaflops out of 255.75 theoretical peak petaflops. The Italian system, housed at CINECA and built by Atos, comprises more than 3,000 Nvidia HGX nodes, each equipped with four Nvidia A100 GPUs (the 40 GB variant), and a single Intel Ice Lake CPU. The water-cooled nodes are connected via Nvidia Mellanox HDR 200Gb/s InfiniBand networking.

Leonardo is the sixth EuroHPC system to be stood up. Two remain outstanding: Deucalion, a Fujitsu-Arm system to be sited in Portugal, which had been expected by the end of this year; and MareNostrum-5, a Lenovo-Atos collaboration with the Barcelona Supercomputing Center, which is said to be coming next year.

As expected, Finnish supercomputer Lumi significantly expanded its computational footprint, more than doubling its previous score from 151.9 petaflops to 309.1 Linpack petaflops. Lumi boasts a green datacenter, thanks to its use of 100 percent renewable energy (hydropower). Its waste heat will supply approximately 20 percent of the yearly district heating needs of its host town, resulting in a stated net-negative carbon footprint of 13,500 tons of CO2 equivalent per year.

Green500 Welcomes ‘Henri’

The Green500, meanwhile, is host to an even bigger showing from Frontier-style systems. May’s Green500 list had four such systems in the first four places: respectively, Frontier TDS (the test and development system for Frontier); Frontier itself; EuroHPC’s LUMI system; and GENCI’s Adastra system. All four of those HPE-built systems used AMD Epyc “Milan” CPUs, AMD Instinct MI250X GPUs and Slingshot 11 networking in the same configuration.

This time, those four systems are joined by the similarly architected GPU partition of Pawsey’s Setonix system and the GPU partition of KTH’s Dardel system. Collectively, those systems (in order: Frontier TDS, Adastra, Setonix GPU, Dardel GPU, Frontier and LUMI) now occupy 2nd through 7th place on the November 2022 Green500 list.

The French system Adastra (an HPE-AMD system) increased its energy efficiency to 58.02 gigaflops per watt, moving up one spot into third position. Adastra ranks #11 on the new Top500 list with a Linpack score of 46.10 petaflops.

In first place is a shot across the bow from Nvidia’s H100 GPU, courtesy of a small system named Henri at the Flatiron Institute in New York. Henri, ranked #405 on the Top500, provides just 2.04 Linpack petaflops out of a theoretical peak of 5.42 petaflops, an unusually low Linpack efficiency of 37.6%.

Henri boasts a whopping 65.1 gigaflops per watt, besting Frontier TDS’s 62.7 gigaflops per watt. It’s an interesting showing from Nvidia, which had been ousted from the top Green500 spots when the Frontier-style nodes took the stage.
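To make the gigaflops-per-watt metric concrete, the short sketch below converts an efficiency figure back into an implied power draw. It is a back-of-envelope estimate only: it assumes the power-measured run delivered the Top500 Rmax quoted in this article, which Green500 submissions do not necessarily do, and the function name `implied_power_kw` is purely illustrative.

```python
# Back-of-envelope: Green500 efficiency (GF/W) -> implied power draw.
# ASSUMPTION: the power-measured run delivered the Top500 Rmax quoted in
# this article; real Green500 submissions may use a different run.


def implied_power_kw(rmax_pflops: float, gflops_per_watt: float) -> float:
    """Return the power (kW) implied by an Rmax and an energy efficiency."""
    rmax_gflops = rmax_pflops * 1e6  # 1 petaflop/s = 1e6 gigaflop/s
    watts = rmax_gflops / gflops_per_watt
    return watts / 1e3


# (Rmax in petaflops, efficiency in gigaflops per watt), as quoted above.
systems = {
    "Henri": (2.04, 65.1),
    "Adastra": (46.10, 58.02),
}

for name, (rmax, eff) in systems.items():
    print(f"{name}: ~{implied_power_kw(rmax, eff):,.0f} kW")
```

Under that assumption Henri works out to roughly 31 kW and Adastra to roughly 795 kW, which underlines how small a system Henri is relative to the HPE-AMD machines it edged out.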

Henri is based on Lenovo’s ThinkSystem SR670 V2 platform, with Intel Xeon Platinum 8362 CPUs (32 cores, 2.8 GHz), the aforementioned H100 80GB PCIe cards and InfiniBand HDR networking.

“It looks like this was rushed so they could pass the test,” said Jack Dongarra in an interview. “Machines usually get between 70 and 85% of the peak, that’s respectable for HPL. So when you score so low, something’s not right. But it should leave room for further optimizations.”
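Linpack efficiency is simply Rmax divided by Rpeak. The sketch below applies that ratio to the two systems in this article whose Rmax and Rpeak are both quoted (Leonardo and Henri) and checks each against the 70 to 85 percent range Dongarra describes as typical; the helper names are illustrative, not part of any Top500 tooling.

```python
# Linpack (HPL) efficiency = Rmax / Rpeak, compared against the 70-85%
# range Dongarra describes as "respectable for HPL".

RESPECTABLE_BAND = (0.70, 0.85)


def hpl_efficiency(rmax_pflops: float, rpeak_pflops: float) -> float:
    """Fraction of theoretical peak achieved on the HPL run."""
    return rmax_pflops / rpeak_pflops


def classify(eff: float) -> str:
    """Place an efficiency below, within, or above the typical band."""
    low, high = RESPECTABLE_BAND
    if eff < low:
        return "below"
    if eff > high:
        return "above"
    return "within"


# (Rmax, Rpeak) in petaflops, as quoted in this article.
systems = {
    "Leonardo": (174.70, 255.75),
    "Henri": (2.04, 5.42),
}

for name, (rmax, rpeak) in systems.items():
    eff = hpl_efficiency(rmax, rpeak)
    print(f"{name}: {eff:.1%} of peak ({classify(eff)} the 70-85% range)")
```

Henri comes out at about 37.6 percent, matching the figure quoted above, while Leonardo lands at about 68.3 percent, just under the range Dongarra cites.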

Recall too that the H100 here is paired with older Ice Lake chips. The platform’s full capability won’t be available until it is paired with either a (forthcoming) Intel Sapphire Rapids or a (now-shipping) AMD Genoa CPU, both of which support PCIe Gen5 and CXL.

Other Trends

There are 41 new systems on the list, and 27 of them are from Lenovo. Most of those (aside from three Flatiron systems, including Henri) are cloud/hyperscale systems that have been creatively carved up to maximize total listings.

Dongarra acknowledged that not all the systems are being used for scientific computing and said that one way to get a better sense of the real HPC machines is to look at the top 100 or 200 systems on the list. Erich Strohmaier does a nice job of surfacing this segmentation in his Top500 BOF talk (to be held on Tuesday), which analyzes the research and commercial groupings separately.

There are two new Chinese systems on the list, both made by Lenovo. The country now has a total of 162 systems on the list, down from 173 on the last edition, as a result of nine systems falling off the list. China also has at least two exascale systems, revealed in last year’s Gordon Bell Prize papers, that were kept off the list, likely due to ongoing geopolitical tensions between the U.S. and China.

The entry point to the list is now 1.73 Linpack petaflops, and the current 500th-place system ranked 460th on the previous (May) list, which was unveiled at ISC in Hamburg. The aggregate Linpack performance of all 500 systems on the 60th edition of the Top500 list is 4.86 exaflops, up from 4.40 exaflops about six months ago and 3.06 exaflops 12 months ago. The entry point for the Top100 segment rose to 5.78 petaflops, up from 5.39 petaflops about six months ago and 4.12 petaflops a year ago.
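Expressed as growth rates, the list-wide figures above are easier to compare. The sketch below computes the percentage increase over each period using only the numbers quoted in this article; `pct_growth` is an illustrative helper name.

```python
# Period-over-period growth of the list-wide figures quoted above.


def pct_growth(new: float, old: float) -> float:
    """Percentage increase from old to new."""
    return (new / old - 1.0) * 100.0


# (current, about six months ago, 12 months ago)
series = {
    "Aggregate Linpack (exaflops)": (4.86, 4.40, 3.06),
    "Top100 entry point (petaflops)": (5.78, 5.39, 4.12),
}

for label, (now, six_mo, twelve_mo) in series.items():
    print(
        f"{label}: +{pct_growth(now, six_mo):.1f}% over ~6 months, "
        f"+{pct_growth(now, twelve_mo):.1f}% over 12 months"
    )
```

By this arithmetic, aggregate performance grew about 10.5 percent over six months and about 58.8 percent over the year, while the Top100 entry point rose about 7.2 and 40.3 percent over the same periods.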