The Role of Containers in Alzheimer’s Disease Research in the Ebbert Lab

Navigating the complexities of scientific research often involves juggling large data sets, multiple tools, and specialized software, especially in the realm of high-performance computing (HPC). In an environment where the lack of reproducibility in scientific studies is a growing concern, tools that enhance accuracy and consistency are invaluable. In the Ebbert Lab, a part of the University of Kentucky’s Sanders-Brown Center on Aging, we’ve found that software containers are essential, not just for streamlining our workflows, but also for addressing the critical issue of reproducibility in our Alzheimer’s disease research. In this article, we will explore the challenges of achieving reliable and repeatable scientific results, our focus on Alzheimer’s disease research, and how software containers have improved both the efficiency and reproducibility of our work.

The genetic puzzle of Alzheimer’s disease

In the U.S. alone, approximately 6.07 million people aged 65 and older are living with Alzheimer’s disease.[1] If you have grandparents, parents, relatives, or friends who have been diagnosed with Alzheimer’s disease, then you know how the condition affects their memory and thinking abilities (i.e., cognition), making it difficult for them to recall precious memories, recognize loved ones, and eventually complete daily tasks. This takes a toll not only on the health and happiness of patients and their loved ones, but also on society at large, costing the United States economy a staggering $345 billion in 2020 alone.[2] Numerous studies suggest that genetics significantly influence susceptibility to Alzheimer’s disease, accounting for 60% to 80% of the risk.[3,4]

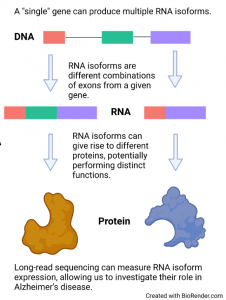

Recent research has identified 75 specific gene regions that could be linked to the risk of developing Alzheimer’s disease.[5] However, identifying these risk genes is only part of the puzzle. To truly understand their impact, we need to understand how these genes contribute to the disease. Most genes can produce multiple “versions” of themselves, known as ‘RNA isoforms,’ which can in turn produce distinct proteins from a “single” gene. These isoforms (i.e., variations of the same gene) can have drastically different, sometimes even contradictory, roles in our bodies.[6] Traditional methods for studying genes, called ‘short-read sequencing,’ haven’t been able to capture these complexities. As a result, we still have much to learn about how these variations of a “single” gene affect our health, including their role in Alzheimer’s disease.

The power of long-read sequencing in RNA research

In simpler terms, traditional techniques for studying genes, known as ‘short-read sequencing,’ can give us a general idea of a gene’s activity but don’t offer much detail. Imagine trying to understand a complex machine by only looking at it from a distance—you’ll get a basic idea, but you’ll miss out on the intricacies. That’s where ‘long-read sequencing’ comes in. These newer technologies allow us to closely examine individual variations of a gene, known as RNA isoforms. It’s like zooming in with a microscope to see the machine’s smaller, crucial components. In the Ebbert Lab, we’re using this advanced long-read sequencing from Oxford Nanopore Technologies to study these individual variations and their role in Alzheimer’s disease. Our goal is to uncover new insights that could pave the way for better diagnosis and treatment options.

Turning raw scientific data into valuable discoveries is a complex process. It involves many steps and uses more than 10 specialized software tools, along with hundreds of different pieces of programming code, known as ‘libraries.’ Managing all these components on a high-performance computing (HPC) server can get complicated very quickly. It’s like trying to keep track of a 1,000-piece puzzle where every piece is essential. This is where specialized tools like Singularity containers and the Sylabs cloud become invaluable. Think of them as organizational systems that help keep all these puzzle pieces in their proper places, making it much easier to see the bigger picture.

Streamlining workflows with containers

In the Ebbert Lab, in collaboration with the University of Kentucky Center for Computational Sciences, we’ve optimized our software management through the use of Singularity containers. These containers encapsulate software applications and their dependencies, streamlining the installation process and resolving potential conflicts between different tools. This is especially crucial in an HPC environment, where managing complex dependencies, especially across hundreds of users with unique needs, can become a cumbersome bottleneck. Additionally, Singularity’s security features align with the specific requirements of our computing cluster at the University of Kentucky, allowing us to maintain a secure computational infrastructure. But perhaps the most impactful application of Singularity containers lies in their integration into our bioinformatics pipelines, where they ensure both efficiency and reproducibility.
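
To make the idea of encapsulation more concrete, here is a minimal Python sketch of how a tool might be run through a container image instead of a local installation. It only assembles a `singularity exec` command line and does not run it. The image name `long_read_tools.sif`, the tool `example_tool`, and the bound directory are hypothetical placeholders, not our actual setup.

```python
import shlex


def singularity_exec_command(image, tool_args, bind_paths=()):
    """Build a `singularity exec` command that runs a tool inside a container image."""
    command = ["singularity", "exec"]
    for path in bind_paths:
        # --bind makes a host directory visible inside the container.
        command += ["--bind", path]
    command.append(image)
    # Everything after the image is the command executed inside the container.
    command += list(tool_args)
    return command


# Hypothetical image, tool, and path names, for illustration only.
cmd = singularity_exec_command(
    "long_read_tools.sif",
    ["example_tool", "--version"],
    bind_paths=["/scratch/project"],
)
print(shlex.join(cmd))
```

Because the tool and its libraries live inside the image file, the same command should behave the same way for any user on any server where Singularity is available.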

We write our pipelines in Nextflow, a domain-specific language designed for data flow and job management. Nextflow natively supports Singularity containers, which can be pulled from the Sylabs cloud, facilitating seamless pipeline execution. The ability to transfer these containers to new systems and share them with the scientific community is essential to performing reproducible research, which has been a major challenge in the past. Even minor differences in software versions can make it impossible to reproduce the exact results of the original study, potentially creating confusion across the scientific community. Not having to worry about reinstalling software when we want to use it on a different server or share it with a collaborator is a huge weight off our shoulders. This setup not only ensures we are always ready for data analysis, but also allows us to “freeze” or archive the software versions used in published research, thereby enabling future reproducibility.
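
One way to picture “freezing” a software version is to record a checksum of the container image next to the location it was pulled from, so that anyone can later confirm they are running the identical image. The Python sketch below illustrates that idea under stated assumptions: the file name, the `library://` address, and the helper functions are hypothetical, and a small stand-in file takes the place of a real image. It is not a description of how our pipeline or the Sylabs cloud tracks versions.

```python
import hashlib
import json
import os
import tempfile


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def freeze_entry(image_path, source_uri):
    """Describe one container image: file name, source, and checksum."""
    return {
        "image": os.path.basename(image_path),
        "source": source_uri,
        "sha256": sha256_of_file(image_path),
    }


def matches_frozen(image_path, entry):
    """Check whether an image on disk is byte-identical to the frozen one."""
    return sha256_of_file(image_path) == entry["sha256"]


# Demonstration with a stand-in file; all names are hypothetical.
with tempfile.TemporaryDirectory() as workdir:
    image_path = os.path.join(workdir, "pipeline_tools.sif")
    with open(image_path, "wb") as handle:
        handle.write(b"stand-in for container image bytes")

    entry = freeze_entry(
        image_path, "library://example-lab/example/pipeline_tools:1.0.0"
    )
    print(json.dumps(entry, indent=2))

    unchanged = matches_frozen(image_path, entry)

    # Any change to the image, however small, is detected.
    with open(image_path, "ab") as handle:
        handle.write(b"modified")
    changed = not matches_frozen(image_path, entry)
```

A record like this could be archived alongside a publication, so a collaborator who pulls the image years later can check that nothing has shifted underneath the analysis.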

We employed this framework in our recent pre-print study titled “Using deep long-read RNAseq in Alzheimer’s disease brain to assess clinical relevance of RNA isoform diversity.” The study underscores the advantages of using long-read sequencing for understanding RNA isoforms in human diseases.

Expanding beyond Alzheimer’s research

Our experience demonstrates that Singularity containers and the Sylabs cloud solve many software installation and management issues within computational biology. This allows researchers to focus on what truly matters: conducting good and reproducible science that can help save and extend human lives. Moreover, our computational pipeline, built on Nextflow and Singularity, offers a ready-to-use resource for any researcher looking to explore RNA isoforms in human diseases.

By streamlining our workflows, simplifying software management, and ensuring scientific reproducibility, we are better equipped to tackle the multifaceted challenges of Alzheimer’s disease research. This approach has the potential to set a new standard for how computational biology research is conducted, enabling scientists to focus on their primary goal: advancing our understanding of diseases to improve human health. As we continue to unravel the role of RNA isoforms in Alzheimer’s disease and beyond, we’re excited about the possibilities that this technological framework offers for accelerating scientific discoveries.

Sources:

[1] Rajan, K. B. et al. Population estimate of people with clinical Alzheimer’s disease and mild cognitive impairment in the United States (2020-2060). Alzheimers Dement 17, 1966–1975 (2021).

[2] 2023 Alzheimer’s disease facts and figures. Alzheimers Dement 19, 1598–1695 (2023).

[3] Bergem, A. L., Engedal, K. & Kringlen, E. The role of heredity in late-onset Alzheimer disease and vascular dementia. A twin study. Arch Gen Psychiatry 54, 264–270 (1997).

[4] Gatz, M. et al. Role of genes and environments for explaining Alzheimer disease. Arch Gen Psychiatry 63, 168–174 (2006).

[5] Bellenguez, C. et al. New insights into the genetic etiology of Alzheimer’s disease and related dementias. Nat Genet 54, 412–436 (2022).

[6] Dou, Z. et al. Aberrant Bcl-x splicing in cancer: from molecular mechanism to therapeutic modulation. J Exp Clin Cancer Res 40, 194 (2021).