A recent article on Tom’s Hardware began with the headline “China Wants 300 ExaFLOPS of Compute Power by 2025.” Intrigued, I read further and found the following line: “China possesses an aggregated computational power of 197 ExaFLOPS.” These numbers did not make sense to me.

The current June 2023 Top500 list has a combined 5.24 ExaFLOPS. Given that China has not placed newer machines on the list for some time and that the list covers only 500 machines, the 197 aggregate ExaFLOPS seemed too high. If, for example, we assume the Top500 list is an average snapshot of HPC machines, then the average performance per machine is 10.48 PetaFLOPS. Thus, one would need on the order of 19K machines to reach an aggregate of 197 ExaFLOPS.
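The arithmetic above can be checked with a short Python sketch. The input figures are the ones quoted in the text; the function and variable names are illustrative, and the "average machine" assumption is the same simplification made above.

```python
# Figures quoted in the text.
TOP500_TOTAL_EFLOPS = 5.24   # combined June 2023 Top500 performance
TOP500_MACHINES = 500        # number of systems on the list
CLAIMED_EFLOPS = 197.0       # aggregate figure attributed to China

PFLOPS_PER_EFLOPS = 1000.0   # 1 ExaFLOPS = 1000 PetaFLOPS


def average_pflops_per_machine(total_eflops: float, machines: int) -> float:
    """Average performance per listed machine, in PetaFLOPS."""
    return total_eflops * PFLOPS_PER_EFLOPS / machines


def machines_needed(target_eflops: float, avg_pflops: float) -> float:
    """Number of 'average' machines required to reach the target."""
    return target_eflops * PFLOPS_PER_EFLOPS / avg_pflops


avg = average_pflops_per_machine(TOP500_TOTAL_EFLOPS, TOP500_MACHINES)
needed = machines_needed(CLAIMED_EFLOPS, avg)
print(f"{avg:.2f} PFLOPS per machine, about {needed:,.0f} machines needed")
```

Under these assumptions, the result is roughly 18,800 average Top500-class machines.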

Coming at these numbers from a different angle, China wants to add approximately 100 ExaFLOPS by 2025. Using the cost of El Capitan, which is about $600 million for a 2 ExaFLOPS machine, the cost of China’s 100 ExaFLOPS comes in at about $30 billion.
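The same back-of-the-envelope cost estimate, written out as a minimal Python sketch. The dollar and ExaFLOPS figures come from the paragraph above; the names are illustrative, and the sketch assumes cost scales linearly with performance, which is a simplification.

```python
# Figures quoted in the text.
EL_CAPITAN_COST_USD = 600e6   # approximate system cost
EL_CAPITAN_EFLOPS = 2.0       # approximate system performance
TARGET_ADDED_EFLOPS = 100.0   # capacity China wants to add by 2025


def cost_per_eflops(system_cost_usd: float, system_eflops: float) -> float:
    """Dollars per ExaFLOPS, using one reference system as the yardstick."""
    return system_cost_usd / system_eflops


def cost_of_capacity(target_eflops: float, usd_per_eflops: float) -> float:
    """Total cost of a target capacity, assuming linear scaling."""
    return target_eflops * usd_per_eflops


unit_cost = cost_per_eflops(EL_CAPITAN_COST_USD, EL_CAPITAN_EFLOPS)
total_cost = cost_of_capacity(TARGET_ADDED_EFLOPS, unit_cost)
print(f"${unit_cost / 1e6:.0f}M per ExaFLOPS, ${total_cost / 1e9:.0f}B total")
```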

Clearly, these numbers don’t make sense, and something else must be going on with these aggregate ExaFLOPS figures.

Input from Addison Snell of Intersect360 Research helps shed some light on these numbers. Addison pointed out that the first issue could be the definition of a FLOP. Traditionally, in HPC, a FLOP is assumed to be a double-precision operation; however, due to the growth of AI, this number could be based on single-precision or mixed-precision measurements.

Furthermore, Addison posits that the number is probably based on hyperscale infrastructure and not on traditional HPC systems. He states that globally, hyperscale companies are responsible for half of all data center spending. These include companies like Tencent, Alibaba, and Baidu, whose infrastructure is predominantly in China. Based on HPC, AI, and hyperscale spending, it is possible that China is spending $20B per year on data center infrastructure. Even at a cost of $400 million per ExaFLOPS, that would result in about 50 aggregate ExaFLOPS per year.
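As a rough check of that estimate, the following Python sketch converts annual spending into ExaFLOPS added per year. Both inputs are the figures mentioned above; the names are illustrative, and the sketch assumes the entire budget buys compute at a flat price, which overstates what real data center spending would deliver.

```python
# Figures quoted in the text.
ANNUAL_SPEND_USD = 20e9      # possible yearly data center spending in China
COST_PER_EFLOPS_USD = 400e6  # assumed cost per ExaFLOPS


def eflops_per_year(annual_spend_usd: float, usd_per_eflops: float) -> float:
    """ExaFLOPS purchasable per year if all spending went to compute."""
    return annual_spend_usd / usd_per_eflops


added = eflops_per_year(ANNUAL_SPEND_USD, COST_PER_EFLOPS_USD)
print(f"about {added:.0f} aggregate ExaFLOPS per year")
```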

Finally, Addison mentions that Hyperscale doesn’t necessarily mean cloud. Many Hyperscalers (e.g., Google, AWS, Meta, Microsoft, Alibaba, Tencent, and Baidu) have massive AI supercomputers that could land rather high on the Top500 but have not submitted results.

Given Addison’s insight, the original numbers make more sense; the initial analysis offered above looked at aggregate ExaFLOPS through an on-prem double precision Top500 benchmark lens. In addition to HPC, many more sectors use FLOPS (whatever they may be) to measure performance. There is, however, a nagging issue about the terminology used when discussing HPC performance.

Achieved is not Aggregate

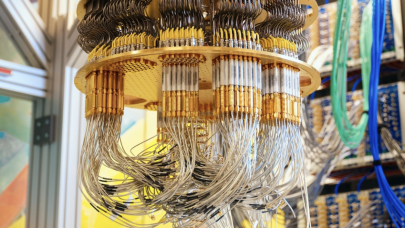

In HPC, when a machine is rated in terms of performance, the Top500 HPL benchmark is usually run. (We will skip the Top500 benchmark arguments and focus on its ability to measure achieved performance, and most importantly, we have a history of results across a myriad of hardware.) This benchmark requires the entire machine to report a double-precision FLOPS result. The HPL benchmark can run on everything from a Raspberry Pi to the largest supercomputers. In addition to providing a performance benchmark, HPL also exercises the entire machine. For instance, if one node fails, the benchmark fails. The benchmark may fail or run exceptionally long if there is a network switch issue. An implied fitness exists in running HPL on any machine, particularly very large supercomputers.

The reported Top500 FLOPS results are an “achieved” number, which differs from an “aggregate” number. When HPC clustering began, there was a tendency to create “data sheet” clusters. For instance, the top performance of a cluster was rated by simply aggregating all the FLOPS for each individual server used for the cluster (same for storage performance). To the disappointment of some, the achievable FLOPS rating was often quite a bit lower.
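The gap between a "data sheet" rating and an achieved rating can be illustrated with a minimal Python sketch. Every number here is hypothetical (node count, per-node peak, and HPL efficiency are made up for illustration, not measurements of any real system), and the `Cluster` class is an invented helper. The Top500 list itself reports both a theoretical peak (Rpeak) and an HPL-achieved figure (Rmax) for each system.

```python
from dataclasses import dataclass


@dataclass
class Cluster:
    """Hypothetical cluster description used only for illustration."""
    nodes: int
    peak_tflops_per_node: float  # data-sheet double-precision peak per node
    hpl_efficiency: float        # assumed fraction of peak achieved by HPL

    def aggregate_tflops(self) -> float:
        """'Data sheet' rating: sum of per-node peak numbers."""
        return self.nodes * self.peak_tflops_per_node

    def achieved_tflops(self) -> float:
        """Achieved rating: aggregate scaled by measured efficiency."""
        return self.aggregate_tflops() * self.hpl_efficiency


# Hypothetical values: 1000 nodes, 3 TFLOPS peak each, 70% HPL efficiency.
example = Cluster(nodes=1000, peak_tflops_per_node=3.0, hpl_efficiency=0.70)
print(f"aggregate: {example.aggregate_tflops():.0f} TFLOPS")
print(f"achieved:  {example.achieved_tflops():.0f} TFLOPS")
```

In practice the efficiency is not a chosen input; it is what falls out of actually running the benchmark across the whole machine, which is why the achieved number carries more information than the aggregate.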

There were even wild estimates of local system clusters. For instance, “If we combine all the desktops in our office, we can create a supercomputer!” However, depending on your local LAN speeds, the heterogeneous nature of desktop machines, and the algorithm you want to use, this goal was often easier said than done. Some applications can work in this environment; Folding@home is one example, where independent parts of a problem can be run separately and then collected as part of the whole solution. The nature of the problem and the parallel algorithm allow an aggregate approach to performance measures.

In the HPC community, when the FLOPS rating is used, it is normally assumed to be “achieved FLOPS” and not a simple aggregate. While the aggregate number often sounds impressive, it lacks context. Consider a car analogy. If we divide the horsepower of the Saturn V rocket (160 million) by that of a standard automobile (180), we get the equivalent of about 900K readily available automobiles. So why do we need to build rockets?

Most seasoned HPC users know that when you quote a FLOPS result, it is usually qualified by the application; it could be HPL, one of the NAS benchmark suite kernels, or an actual application. In the absence of any qualifying benchmark or application, HPL is usually assumed. In general, FLOPS usage in an HPC context is not ambiguous.

HPC has had the luxury of talking about performance using our own metrics. We are not the only community that likes to boast about performance using FLOPS. We should, however, be clear about what we mean when we use FLOPS to describe performance. Including data like precision, application type, and achieved performance helps move the HPC-FLOPS needle in the right direction.