Oct. 11, 2024 — Giga Computing, a subsidiary of GIGABYTE and an industry leader in generative AI servers and advanced cooling technologies, has announced support for AMD EPYC 9005 Series processors with the release of new GIGABYTE servers alongside BIOS updates for some existing GIGABYTE servers using the SP5 platform.

This first wave of updates covers more than 60 servers and motherboards, giving customers a wide choice of platforms built to deliver strong performance with 5th Generation AMD EPYC processors. In addition, for the launch of the AMD Instinct MI325X accelerator, GIGABYTE has designed a new server, which will be showcased at SC24 (Nov. 19-21) in Atlanta.

New GIGABYTE Servers and Updates

To cover a broad range of workload scenarios, these new solutions span modular-design servers, edge servers and enterprise-grade motherboards, and all will ship with support for AMD EPYC 9005 Series processors. The XV23-ZX0 is one of many new solutions. It is notable for its modular server design, which pairs two AMD EPYC 9005 processors with support for up to four GPUs and three additional FHFL slots. It also has 2+2 redundant power supplies on the front side for ease of access.

The GIGABYTE portfolio includes a large number of AMD EPYC 9004 Series CPU-based servers and motherboards. To support them, two waves of updates will roll out adding support for AMD EPYC 9005 CPUs, covering most models. To determine whether an existing server supports 5th Gen AMD EPYC CPUs, check its GIGABYTE product page. If the description lists EPYC 9005 support, a BIOS update will be available to download for that product. If the description lists only EPYC 9004, then EPYC 9005 is not supported. Memory speeds for one-DIMM-per-channel configurations differ between the two generations, so customers should also check the product page to confirm whether 6000 MT/s is supported.

Technological Advances with AMD

AMD EPYC 9005 Series processors are the latest in the EPYC family. They feature the “Zen 5” architecture and offer a wide range of core counts (up to 192 cores and 384 threads), frequencies (up to 5 GHz), cache capacities and more. Designed for AI-enabled data centers, these processors provide leading performance, efficiency and x86 software compatibility. They support workloads ranging from corporate AI-enablement initiatives to large-scale cloud-based infrastructure to business-critical applications, while improving energy efficiency and reducing data center sprawl. AMD EPYC 9005 Series CPU-based servers provide a seamless path for businesses looking to lead in compute and AI solutions.

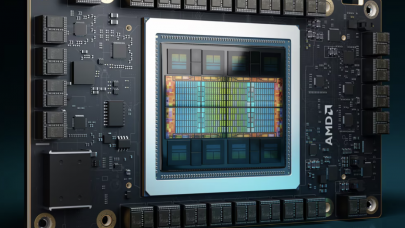

The AMD Instinct MI325X accelerators, built on the AMD CDNA 3 architecture, set new standards in AI performance and efficiency for both training and inference. With industry-leading memory capacity and 6 TB/s of memory bandwidth, they optimize performance and help reduce system TCO. Integrated with AMD ROCm software, the new AMD accelerators support key AI frameworks and simplify deployment. AMD Instinct MI325X accelerators will also integrate seamlessly with GIGABYTE infrastructure and come with strong Day 0 support from ecosystem partners such as OpenAI, PyTorch, Hugging Face, Databricks, Lamini and many more, helping enterprises scale efficiently.

“Using the same socket to support multiple generations of AMD EPYC processors is quite impressive, considering the boost in compute performance that we have seen with EPYC 9005 Series CPUs,” said Vincent Wang, VP of Sales at Giga Computing. “As these technological advancements such as the AMD Instinct MI325X continue to push boundaries in compute in AI and more, we appreciate the relationship we have with AMD that allows us to echo our updates at the same time as AMD’s announcement.”

“AMD is the data center solutions provider of choice for leading organizations, whether they are enabling corporate AI initiatives, building large-scale cloud deployments, or hosting demanding business applications on-premises,” said Ravi Kuppuswamy, senior vice president, Server Business Unit, AMD. “Our latest 5th Gen AMD EPYC CPUs provide the performance, flexibility and reliability – with compatibility across the data center ecosystem – to deliver tailored solutions that meet the unique demands of the modern data center.”

“AMD is leading the AI revolution, setting new benchmarks in generative AI performance and capabilities with our Instinct accelerators,” said Travis Karr, corporate vice president, Business Development, AMD. “Our latest Instinct MI325X accelerators, built on CDNA3 architecture and featuring an industry-leading 256GB of HBM3E memory, enable organizations to efficiently scale their most complex AI workloads, driving unprecedented advancements in natural language processing, computer vision, and beyond.”

Today, at Advancing AI 2024, GIGABYTE is showcasing its newly released R283-ZK0 “zig zag” server, the only known server with 48 DIMMs in a single node. Also on display is a new 8U server design concept. It is a revamped 8-GPU baseboard server for the AMD Instinct MI325X GPU, designed for large language models and AI inference.

Visit the GIGABYTE solution pages for AMD EPYC 9005 Series and AMD Instinct MI300 Series.

About Giga Computing

Giga Computing Technology is an industry innovator and leader in the enterprise computing market. Having spun off from GIGABYTE, we maintain hardware expertise in manufacturing and product design, while operating as a standalone business that can drive more investment into core competencies. We offer a complete product portfolio that addresses all workloads from the data center to the edge, including traditional and emerging workloads in HPC and AI, data analytics, 5G/edge, cloud computing and more. Our longstanding partnerships with key technology leaders ensure that our new products are among the most advanced and launch alongside new partner platforms. Our systems embody performance, security, scalability and sustainability. To find out more, visit https://www.gigacomputing.com.

Source: GIGABYTE