ELMSFORD, N.Y., Sept. 27, 2023 — SEEQC announced today that it is working toward the first all-digital, ultra-low-latency chip-to-chip link between quantum computers and GPUs, compatible with any quantum computing system, in collaboration with NVIDIA.

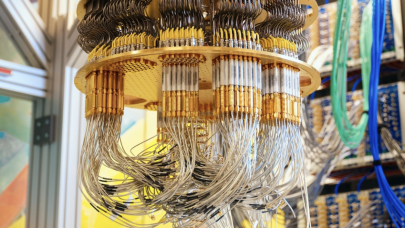

When completed, this will be the first time an active multi-chip module quantum processor is directly linked with both a GPU- and a CPU-operational platform, creating a tightly coupled, fully digital integration of quantum and classical computing technologies. Because SEEQC’s technology is entirely digital, it will remove several analog steps and the expensive, bulky, noise-contributing hardware overhead of quantum processing, sharply reducing the bandwidth needed, easing latency bottlenecks and unlocking scalable enterprise applications in the future.

SEEQC is the only company in the world creating a fully digital chip-based quantum computing technology that spans all quantum technologies. Through the adoption of the NVIDIA platform, SEEQC will bring a new level of high-speed, low-noise, low-latency quantum computing to enable functional quantum computing at scale.

Integrating Classical and Quantum Computing to Meet Explosive Demand for AI

This pioneering integration will support research and applications in practical, real-world implementations such as quantum artificial intelligence (AI), quantum machine learning (ML) and other datacenter-scale applications to meet the increasing needs of compute- and data-intensive enterprise AI.

“This all-digital integration will take advantage of each system for a low-latency interface while maintaining the highest possible bandwidth performance from each individual system,” said John Levy, CEO and co-founder of SEEQC. “The development we’re taking on with NVIDIA represents the best of breed in both quantum and classical; and, together, both core technologies create unprecedented compute power.”

Integrating quantum processors and GPUs will advance the NVIDIA CUDA Quantum platform for hybrid quantum-classical computing. This collaboration is a major milestone in SEEQC’s technology roadmap. With GPU acceleration and a software platform that is unique to each industry, SEEQC will now be able to develop a full-stack quantum computing architecture that can enable hybrid quantum AI and ML programs, as well as scalable real-time error correction, all powered by SEEQC’s proprietary Single Flux Quantum (SFQ) technology.

A Technology-Compatible Innovation, No Matter the Quantum System

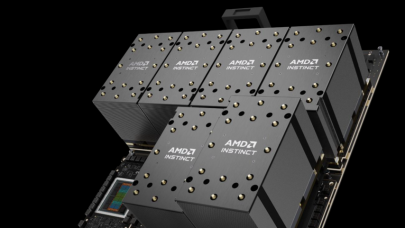

“Tight integration of quantum with GPU supercomputing is essential for progress toward useful quantum computing,” said Tim Costa, Director, HPC and Quantum Computing Product at NVIDIA. “Coupling the NVIDIA GH200 Grace Hopper Superchip with SEEQC’s digital chip architecture — tied together by the CUDA Quantum programming model — will provide a major step toward that goal.”

“With this collaboration, SEEQC and NVIDIA are paving the way for the enterprise-grade quantum computing era,” said Jean-François Bobier, Quantum Computing research lead for Boston Consulting Group (BCG). “A one-hour computation with high speed and low latency turns into 40 days on slower quantum architectures. Therefore, low latency is the key to scalable applications and error correction, which will unlock 90% of quantum computing use cases in value.”

Scalable, Heterogeneous Quantum Computing

The ultra-low-latency, digital chip-to-chip integration of a fully chip-based quantum processor with the Grace Hopper Superchip will deliver a powerful, scalable heterogeneous computing platform. This allows SEEQC to use NVIDIA’s digital architecture seamlessly with its own, advancing its capabilities for operations and processing algorithms.

“Scalable Energy-Efficient Quantum Computing is what SEEQC stands for; it’s a company well known to quantum experts. It will be the first to bring a ubiquitous, enterprise-grade quantum computing solution to market: a chip-based, full-stack QPU with high error correction potential, available across major quantum computing architectures,” said André M. König, CEO of Global Quantum Intelligence. “It will enable end users and application developers for the first time to leverage the power of quantum across a wide range of fields — all at the scale of NVIDIA and fully integrated with the best-of-breed classical stack.”

“We are connecting quantum and classical computing in a highly efficient way, taking advantage of a tight integration of both technologies to power systems that will run the most important quantum and classical applications,” said Matthew Hutchings, CPO and co-founder of SEEQC. “The only way to do this and accommodate high speed, low latency and high bandwidth is to do so on a chip-to-chip basis. This collaboration creates not just the possibility for scalable, real-time error correction in the near-term, but future quantum applications, many of which are unimaginable today.”

For more information on SEEQC’s fully digital quantum computing technology, visit seeqc.com.

ABOUT SEEQC

SEEQC designs and manufactures superconducting digital chips, firmware and software for scalable, energy-efficient quantum computing systems. These systems are based on its proprietary Single Flux Quantum (SFQ) chips, produced at the company’s multi-layer superconductive electronics chip foundry in Elmsford, NY. This chip-based architecture is designed to increase performance while reducing quantum requirements, complexity, cost and latency. SEEQC’s chip-based solution is augmented by the company’s firmware and software, which support a full spectrum of applications for third-party developers. SEEQC integrates its chip-based solution with GPU and CPU data centers and offers it to developers of quantum computing systems across all quantum modalities. SEEQC is based in Elmsford, NY, with teams in London, UK and Naples, Italy.

Source: SEEQC